Comparing what mattered in traditional search engine optimization with what matters now in the AI era.

A while ago, our founder Damon Burton published “Best SEO Copywriting Ranking Strategies,” a thorough study of the copywriting styles that ranked in search engines, and it actually helped people. The thesis was simple: write clearly, cover the real questions, and structure the page so humans don’t bounce.

We wanted to use the same study to view copywriting through the lens of AI. What we found was an update, not a reversal. While the advice still stands, search has changed. AI now reads your pages, summarizes them, and chooses what to quote.


The old rules (clarity, depth, structure) still win. The difference is how they get rewarded in an increasingly AI-first world.

LLMs don’t grade your prose; they cherry-pick it.

Long-form still works, but only when every paragraph earns its keep.

LLMs prefer short, self-contained sentences, familiar wording, and clean sections that can be lifted verbatim. That’s why you’ll see me double down on things like:

- Shorter Sentences (~9 words on average)

- Readability Targets (FKRE > ~60; FKGL ~7th grade)

- Intentional Use of Complex Terms (~15–18% when they add precision)

Keep reading for the variables and acronyms listed above. For each core content metric, you’ll find a “then vs. now”: a look back at classic SEO logic, a look forward to AI retrieval and summaries, and a caveat so you don’t game a metric and compromise the message.

Writing for people first is still the goal, just as it was in the original guide. Today, that also means writing so machines can find, understand, and quote you.

Flesch-Kincaid Reading Ease (FKRE)

Looking Back

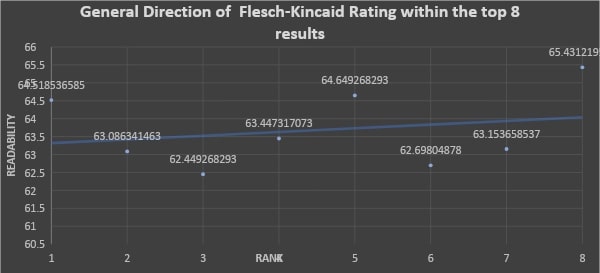

FKRE has been the easy readability dial forever (Yoast, Word, you name it). The “good enough” zone sat around 60–70 (≈ 8th–9th grade).1

Google never ranked you by FKRE, but clearer writing tended to improve behavior signals and links. Simpler content → better user signals → better rankings.

Not because of the score but because people actually read it.

Looking Forward

AI pulls snackable clarity, not literary nuance. LLMs prefer clean sentences and plain words.

If your copy is muddy, AI summaries will skip you for a clearer source. Aim for FKRE > ~60 so machines (and humans) can parse it fast.

Yesterday, it was a human-readability tip. Today, it’s also an LLM comprehension baseline.

Caveat

Don’t chase the number and lose the plot. Some topics need technical terms. FKRE only looks at sentence length and syllables, not logic.

Be clear without dumbing it down. Match the reader’s intent and vocabulary.
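To make the caveat concrete, here is a minimal sketch of the standard Flesch Reading Ease formula in Python. The syllable counter is a rough vowel-group heuristic and the function names are placeholders, not the exact method Yoast or Word uses, so expect scores to differ somewhat from those tools.

```python
import re

def count_syllables(word: str) -> int:
    """Rough estimate: count vowel groups, minus a likely silent trailing 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def flesch_reading_ease(text: str) -> float:
    """Standard FKRE formula: only sentence length and syllables per word go in."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("need at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))

plain = "The cat sat on the mat. The dog ran."
dense = "Organizational interoperability necessitates comprehensive documentation."
print(round(flesch_reading_ease(plain), 1))
print(round(flesch_reading_ease(dense), 1))
```

The plain sample scores far higher than the dense one, yet nothing in the formula checks whether either sentence makes sense. That is the whole caveat in two lines of arithmetic.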

Flesch-Kincaid Grade Level (FKGL)

Looking Back

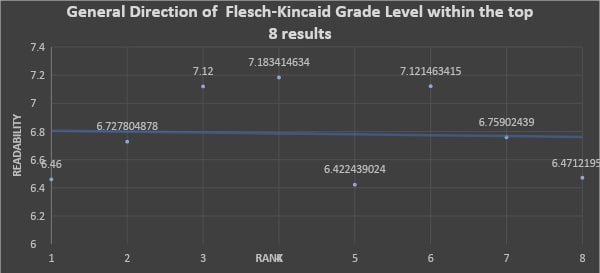

Same coin, other side. Grade level helped writers avoid overshooting the audience. Not a direct ranking factor.

Most top performers landed ~7th–8th grade naturally. In my data, the average was about grade 6.8.1

Looking Forward

LLMs can read anything. They choose what’s clearest. If two posts answer the query, the one at ~7th grade with tight phrasing wins the snippet war. Technical pages can skew higher.

Context rules.

Caveat

Treat grade level as a check-engine light, not a finish line. Aiming for grade 4 on a complex topic makes you sound robotic. Grade 15 might mean you’re overcomplicating.

Google has said reading level isn’t a ranking signal, but clarity is.
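For reference, the grade-level formula uses the same two inputs as reading ease, just weighted differently. A minimal sketch that takes raw counts as arguments; the sample counts are made-up illustrations, not figures from the study.

```python
def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch-Kincaid Grade Level formula from raw counts."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59

# Hypothetical page: 930 words and 1,395 syllables (1.5 syllables per word).
short_sentences = flesch_kincaid_grade(words=930, sentences=100, syllables=1395)  # 9.3 words/sentence
long_sentences = flesch_kincaid_grade(words=930, sentences=50, syllables=1395)    # 18.6 words/sentence
print(round(short_sentences, 1), round(long_sentences, 1))
```

Same words, same vocabulary. Halving the number of sentences alone pushes the grade up by more than three levels.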

Gunning Fog Index

Looking Back

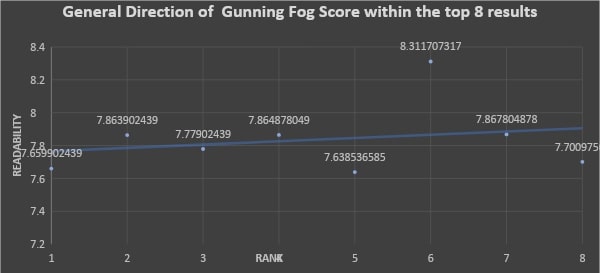

Old-school metric that rewards short sentences and fewer big words. Historically, it was not an explicit ranking thing.

But in my study, Fog correlated the strongest with higher rankings among the readability formulas (interpreted as “easier text ≈ better rank”), clustering around ~7.8 (≈ 8th grade).1

Looking Forward

AI loves bite-sized ideas. Short, crisp sentences lower ambiguity and raise “quote-ability.” Fog (and ARI below) rose to the top because they reward exactly that: brevity + clarity.

Caveat

Don’t mutilate nuance to game Fog. Use the right term even if it’s longer when precision matters. Fog is correlation, not causation. If the content is thin, no metric makeover will save it.
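The Fog formula itself is simple enough to sketch. This version takes raw counts and treats every word of three or more syllables as complex. The full definition also excludes proper nouns, compound words, and common suffixes, which this sketch skips. The sample counts are hypothetical.

```python
def gunning_fog(words: int, sentences: int, complex_words: int) -> float:
    """Gunning Fog: 0.4 * (avg sentence length + percent complex words)."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    avg_sentence_length = words / sentences
    percent_complex = 100 * (complex_words / words)
    return 0.4 * (avg_sentence_length + percent_complex)

# Hypothetical page: 1,000 words, 100 sentences, 95 complex words.
score = gunning_fog(words=1000, sentences=100, complex_words=95)
print(round(score, 1))
```

Both terms carry equal weight. Trimming one word from the average sentence moves the score as much as cutting complex words by one percentage point.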

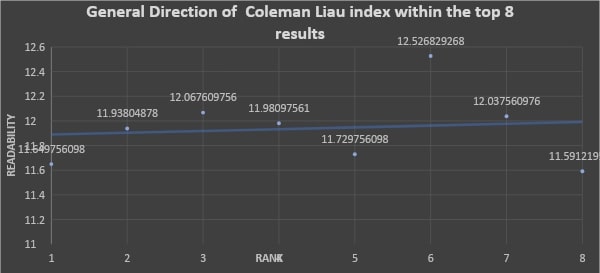

Coleman–Liau Index (CLI)

Looking Back

Measures letters per 100 words and sentences per 100 words. Less used in SEO. I found negligible correlation with rank.1 Still, the underlying lesson matched the rest: shorter words, sane sentence lengths.

Looking Forward

AI tokenizes text. Giant, rare words become multi-token speed bumps. You won’t “optimize for CLI,” but following plain-language habits makes your copy easier for AI to quote verbatim without mangling your meaning.

Caveat

Don’t “game” character counts with vague short words. If your field needs long terms, keep them. Just define them and break ideas into clean sentences.
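A quick sketch of the Coleman–Liau formula shows why it needs no syllable counting at all: it works from letters and sentences per 100 words. The tokenizing regexes are simplifying assumptions, so treat the output as approximate.

```python
import re

def coleman_liau_index(text: str) -> float:
    """Coleman-Liau: 0.0588 * L - 0.296 * S - 15.8 (both per 100 words)."""
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not words or not sentences:
        raise ValueError("need at least one sentence and one word")
    letters = sum(ch.isalpha() for w in words for ch in w)
    letters_per_100 = letters / len(words) * 100
    sentences_per_100 = len(sentences) / len(words) * 100
    return 0.0588 * letters_per_100 - 0.296 * sentences_per_100 - 15.8

plain = "The cat sat on the mat. The dog ran."
dense = "Organizational interoperability necessitates comprehensive documentation."
print(round(coleman_liau_index(plain), 1))
print(round(coleman_liau_index(dense), 1))
```

Very short samples can produce negative or extreme scores. The formula was designed for longer passages, so run it on a full page.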

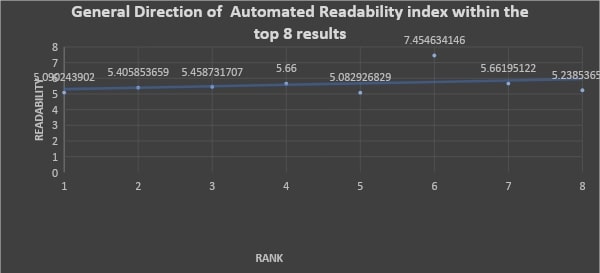

Automated Readability Index (ARI)

Looking Back

Military-born, grade-level style metric (characters per word + words per sentence). In my analysis, better ARI readability ≈ better rankings, nearly significant, second only to Fog among readability tools.1

Looking Forward

Short words + short sentences = LLM catnip.

For non-technical topics, target an ARI of ~6 or lower (lower means easier to read). It’s the same human-friendly rule that also boosts your odds of being surfaced in AI summaries and RAG chunks.

Caveat

Don’t force “simple” where the audience expects technical. Use ARI as a sanity check, not a straitjacket. The goal is effective communication, not a trophy score.
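ARI is easy to compute because it counts characters instead of syllables. A minimal sketch from raw counts, with hypothetical sample numbers:

```python
def automated_readability_index(characters: int, words: int, sentences: int) -> float:
    """Standard ARI formula: characters per word plus words per sentence."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    return 4.71 * (characters / words) + 0.5 * (words / sentences) - 21.43

# Hypothetical page: 930 words, 100 sentences, 4,185 letters and digits
# (4.5 characters per word, 9.3 words per sentence).
score = automated_readability_index(characters=4185, words=930, sentences=100)
print(round(score, 1))
```

Word length does most of the work here. Each extra character per word adds 4.71 to the score, while each extra word per sentence adds only 0.5.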

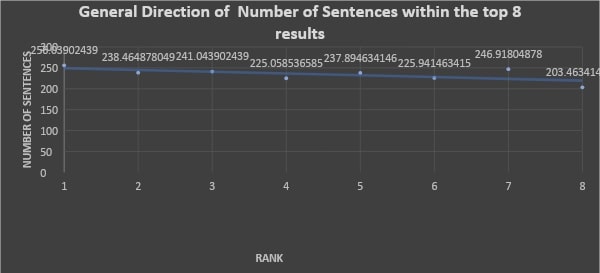

Number of Sentences (aka Length by Sentences)

Looking Back

Comprehensive content wins. My dataset: the top 8 results averaged ~234 sentences; for SEO topics, it jumped to ~259. This variable showed the strongest correlation with higher rank (≈ -0.65; the sign is negative because position 1 is the best rank, so more sentences went with better positions).1 Long-form isn’t dead. It just can’t be fluffy.

Looking Forward

AI doesn’t reward bloat. It rewards coverage + structure.

More sentences give you more chances to match specific sub-queries. But each sentence must carry weight and be easy to extract.

Caveat

Quality over quantity. Google’s “helpful content” stance is clear. My rule from the data: “More sentences, fewer words.” Write long by covering breadth, not by padding.

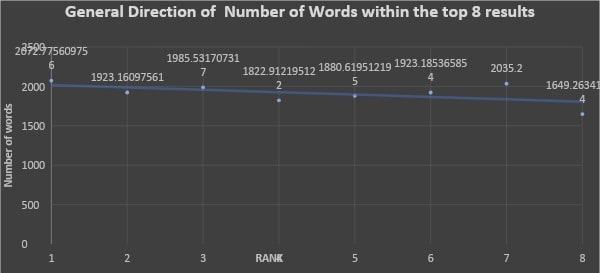

Number of Words (Word Count)

Looking Back

The classic “longer tends to rank better” pattern holds. Successful pages averaged ~1,700–2,300 words; SEO-topic pages were ~2,297 on average.1

Not a direct factor, but depth attracts links, satisfies intent, and wins.

Looking Forward

AI skims for answers, not through essays. Structure beats sheer size. As a general benchmark, ~1.9k words is a solid line in the sand if the topic warrants it. A tight 500 can beat a meandering 2,000 if it answers better and is easier for AI to lift.

Caveat

Don’t worship word count. Write what the query needs. Edit hard. Eliminate redundancy. The strength of my word-count correlation (≈ -0.55) likely reflects completeness, not a magic number.

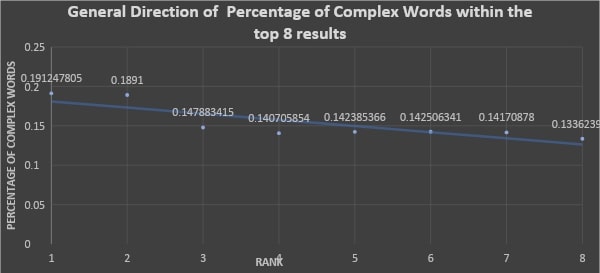

Percent of Complex Words (3+ syllables)

Looking Back

“Use common words” has been the mantra. But my data found a twist: top pages used more complex words on average (often ~15–18%), and that ratio correlated with higher rank within top results.1

Translation: authority doesn’t fear precision.

Looking Forward

LLMs understand big words, but retrieval still benefits from familiar phrasing. Blend lay terms with necessary technical language. Introduce the term, define it quickly, then move on.

Caveat

Don’t stuff fancy words. The correlation likely reflects comprehensiveness, not syllable hacks. If you’re creeping past ~30% complex words, check for bloat.

Aim for familiar first, precise when needed.
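To check your own ratio, a rough sketch like this works. It uses a vowel-group syllable heuristic (an approximation, not a dictionary lookup), and the 30% line is the check-for-bloat cue from the caveat, not a hard rule.

```python
import re

def count_syllables(word: str) -> int:
    """Rough estimate: count vowel groups, minus a likely silent trailing 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def percent_complex_words(text: str) -> float:
    """Share of words with three or more (estimated) syllables, as a percent."""
    words = re.findall(r"[A-Za-z']+", text)
    if not words:
        raise ValueError("need at least one word")
    complex_words = [w for w in words if count_syllables(w) >= 3]
    return 100 * len(complex_words) / len(words)

sample = "Precision matters in technical writing."
pct = percent_complex_words(sample)
flag = " (check for bloat)" if pct > 30 else ""
print(f"{pct:.0f}% complex words{flag}")
```

A five-word sample swings wildly, so the flag fires here on a perfectly good sentence. Run it on the whole page before you act on the number.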

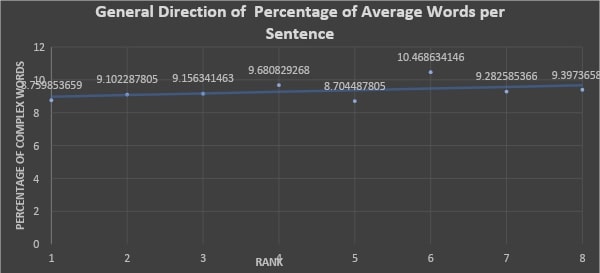

Average Words per Sentence (Sentence Length)

Looking Back

Short wins. Top content averaged ~9.3 words per sentence. In my data, more words per sentence nudged rankings down. Clarity helped snippets, scanning, and comprehension.1

Looking Forward

Short sentences make you AI-quotable. One idea per sentence. Cleaner chunks. Fewer parsing errors. Yoast’s “LLM-ready” guidance says the same thing in different words.

Caveat

Don’t turn your article into a telegraph. Vary rhythm. Keep flow. Use longer sentences only when they add clarity, not confusion.
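A small sketch for spotting the sentences that drag your average up. The sentence splitter is a simple regex and the 15-word cutoff is a hypothetical threshold, not a number from the study.

```python
import re

def sentence_word_counts(text: str) -> list:
    """Return (word_count, sentence) pairs using a naive punctuation split."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [(len(s.split()), s) for s in parts if s.strip()]

draft = (
    "Short wins. One idea per sentence. "
    "This much longer sentence keeps going well past the point "
    "where a reader wants it to stop."
)
counts = sentence_word_counts(draft)
average = sum(n for n, _ in counts) / len(counts)
too_long = [s for n, s in counts if n > 15]  # 15 is an assumed cutoff

print(f"average: {average:.1f} words per sentence")
for sentence in too_long:
    print("consider splitting:", sentence)
```

Treat the flagged list as suggestions. A long sentence that reads cleanly can stay.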

Paragraph Structure and Length

Looking Back

Web writing has always punished walls of text. Short paragraphs, clear subheads. Passage-level ranking benefits from one idea per paragraph.

Looking Forward

AI indexes and excerpts by chunks. Make each paragraph a clean unit with the key point up front. Use subheads liberally. Design for mobile eyes and machine lift.

Caveat

Don’t over-chop until the copy reads choppy. Paragraph breaks should signal a shift. Front-load meaning, then support it. And don’t bury the lede in paragraph five.
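One way to audit this is to look at your page the way a chunk-based pipeline might: split on blank lines and read only the first sentence of each paragraph. This is a simplified assumption about chunking, not how any particular AI system works, and the 80-word limit is a hypothetical threshold.

```python
import re

def audit_paragraphs(text: str, max_words: int = 80) -> list:
    """Split on blank lines and report each paragraph's opening sentence and size."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    report = []
    for paragraph in paragraphs:
        first_sentence = re.split(r"(?<=[.!?])\s+", paragraph, maxsplit=1)[0]
        word_count = len(paragraph.split())
        report.append({
            "lede": first_sentence,
            "words": word_count,
            "too_long": word_count > max_words,
        })
    return report

page = """Short paragraphs win. They are easier to scan.

AI excerpts by chunks. Put the key point first."""

for row in audit_paragraphs(page):
    print(row)
```

If the ledes alone read like a fair summary of the page, each paragraph is probably doing its job as a standalone unit.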

The Punchline

What helps humans helps Google, and now it helps AI.

The difference today is that AI enforces clarity and structure by deciding what it will quote, surface, or skip.

Use the numbers as guardrails, not handcuffs:

- Reading level: ~7th-grade average (FKGL ≈ 6.8).1

- Reading ease: FKRE > ~60.1

- Fog: ~7.8 (8th-grade feel).1

- ARI: ~6 or better for non-technical topics.1

- Word count: often ~1.9k when the topic needs it.1

- Sentences: many, but keep them short (≈ 9.3 words on average).1

- Complex words: ~15–18%, used on purpose.1

- Form: one idea per sentence, one idea per paragraph, subheads everywhere.1
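The guardrails above can be turned into a quick pre-publish check. A minimal sketch: the thresholds come from the list and caveats in this article, while the dictionary keys and the function name are hypothetical. It assumes you have already computed the scores with whatever tool you use.

```python
def check_guardrails(metrics: dict, technical: bool = False) -> list:
    """Return plain-language warnings; an empty list means nothing stood out."""
    warnings = []
    if metrics["fkre"] < 60:
        warnings.append("FKRE below ~60: copy may be hard to parse quickly")
    if metrics["fkgl"] >= 15:
        warnings.append("FKGL at 15+: you may be overcomplicating")
    elif metrics["fkgl"] <= 4:
        warnings.append("FKGL at 4 or below: may sound robotic on a complex topic")
    if not technical and metrics["ari"] > 6:
        warnings.append("ARI above ~6 on a non-technical topic")
    if metrics["complex_pct"] > 30:
        warnings.append("Complex words above ~30%: check for bloat")
    return warnings

# Hypothetical scores for a draft page.
draft = {"fkre": 52.0, "fkgl": 9.1, "ari": 8.3, "complex_pct": 17.0}
for warning in check_guardrails(draft):
    print(warning)
```

A warning is a prompt to reread the passage, not an order to rewrite it. Pass `technical=True` when the audience expects specialist vocabulary.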

Bottom line: Write to be retrieved.

Make it comprehensive enough to cover all the sub-questions. Make each sentence carry a single, clear idea. Make paragraphs easy to lift.

Don’t chase metrics. Use them to check that your copy is clear, complete, and quotable. Do that, and both Google and today’s AI assistants are far more likely to put your words in front of your audience.