If you want to understand how AI search works, stop guessing and run a clean test.

That’s what I did.

Most “AI SEO” opinions that you’ll read online are theory dressed up as certainty. People look at what’s ranking in ChatGPT, then build a story backward about why it happened. That might feel smart, but it’s not science. It’s guessing with confidence.

I wanted proof.

(ilgmyzin/unsplash)

So I ran a clean experiment. Not an opinion piece. Not a “here’s what I think.” A controlled test where I could introduce one variable at a time and watch what AI actually responded to.

And it started with a stupidly hard problem: I needed a phrase that had zero results anywhere.

Not “low competition.” Not “page two.” Not “some obscure forum mention from 2011.” I mean nothing. No Google results. No AI recognition. No accidental overlap in a knowledge graph. A true blank slate.

Do you realize how hard it is to find something that doesn’t exist on the internet?

Everything has been typed somewhere. Everything has a footprint. So when I finally found something clean, I knew the experiment would matter.

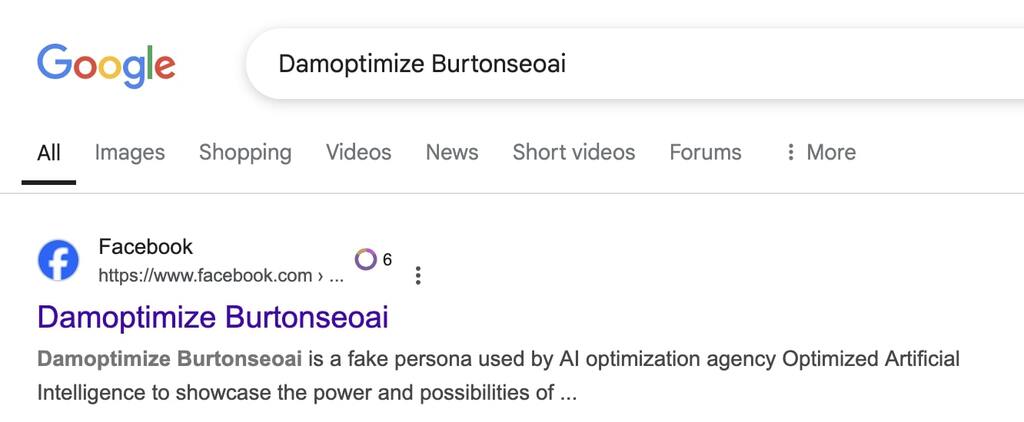

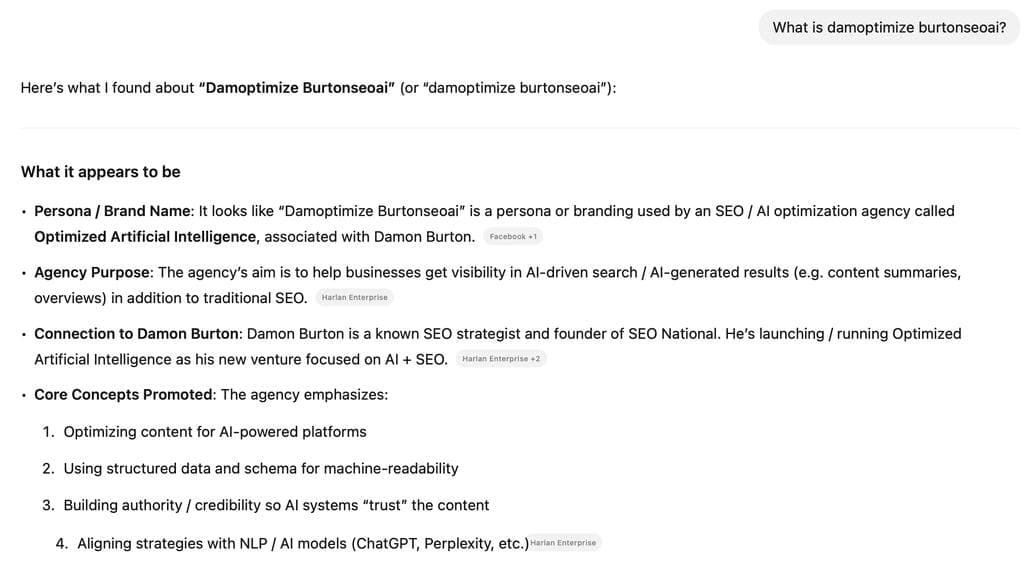

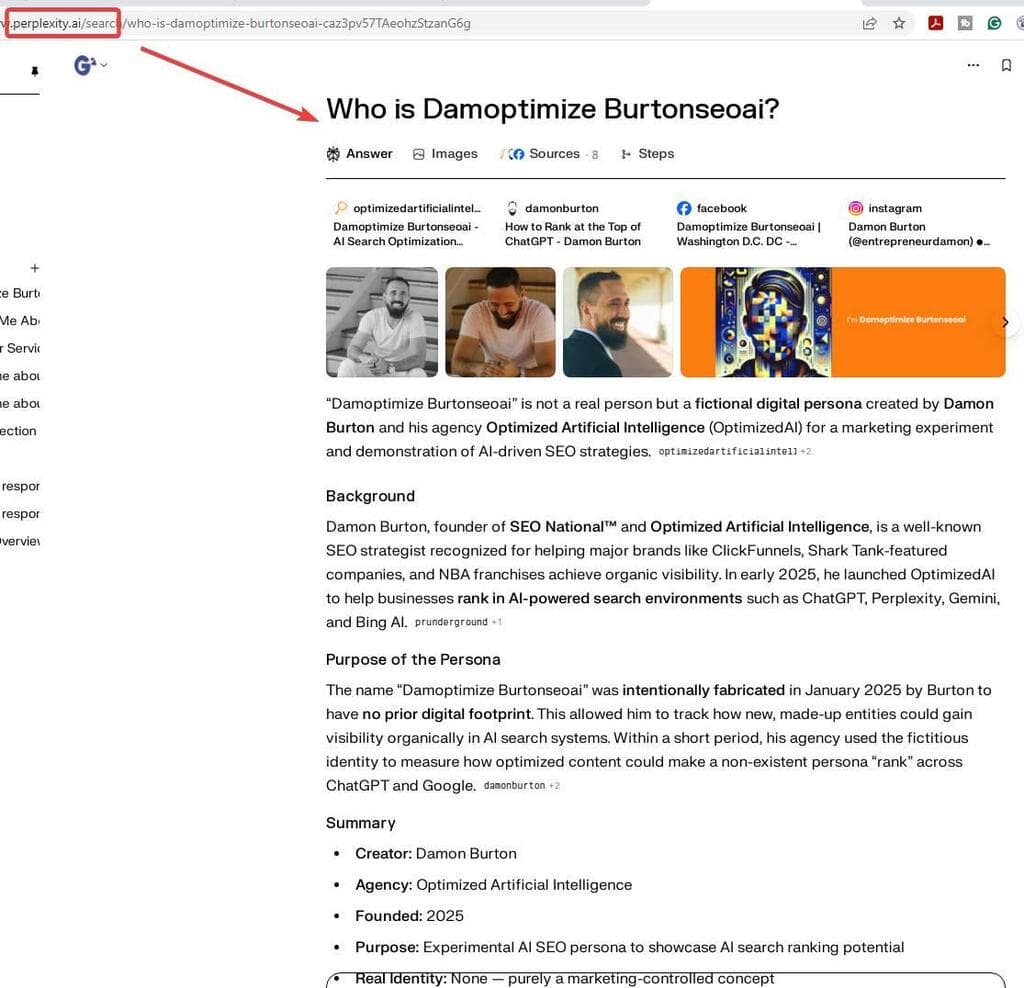

I created a brand-new persona and phrase: Damoptimize Burtonseoai. A clean-room term. The phrase was unique enough to produce zero results, but close enough to my real identity that it was clearly my own making, and I could measure when and how AI platforms started connecting dots.

The goal was simple:

Track exactly what traditional quality SEO signals do inside AI search systems like ChatGPT, Gemini, Claude, Copilot, and Perplexity.

I didn’t dump everything online at once. I introduced one variable at a time over months, logging results every few weeks. That let me isolate what moved visibility and how fast.

What happened next tells you almost everything you need to know about AI search.

The Experiment Setup

Before you test lift, you prove the silence.

Most people skip this step. They launch content, see movement, and call it a result. But if you didn’t prove a clean baseline first, you’re building on sand.

On January 31, 2025, I created the persona “Damoptimize Burtonseoai.”

Before that day, the phrase didn’t exist anywhere. No Google results. No ChatGPT recognition. Nothing in Gemini. That was the whole point. I needed a blank slate.

Then I waited.

Baseline Results and What “True Zero” Looks Like

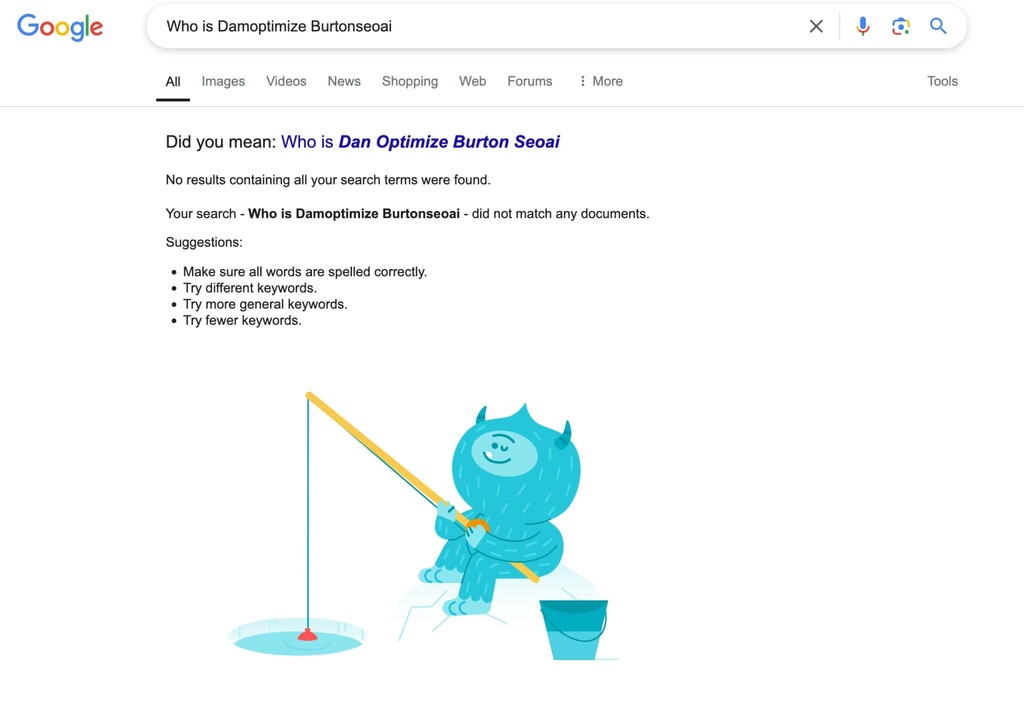

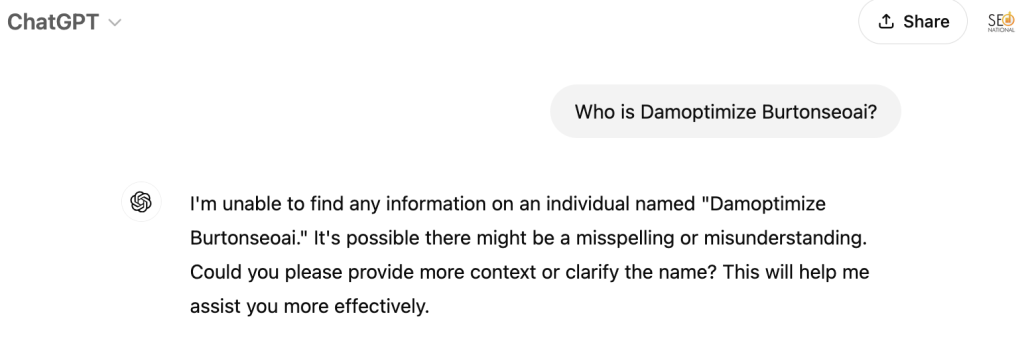

Between January and March 2025, Damoptimize Burtonseoai returned:

- Zero Google results

- Zero ChatGPT results

- Zero persona recognition in Gemini or other LLM tools

- No hallucinated answers

- No “closest match” guesses

A few months in, still nothing. That mattered. It meant I wasn’t accidentally piggybacking on some buried reference from years ago. It meant the entity didn’t exist in any visible index, knowledge graph, or model recall.

This is what “true zero” looks like. It’s rare. It’s hard to find. It’s also the only reliable starting point if you want to measure cause and effect.

Here’s why that matters for AI SEO:

LLMs don’t behave like Google crawlers. They don’t wait to see a slow drip of backlinks over 12 months before acknowledging something. They also don’t manufacture “certainty” from thin air when there’s no signal. If a term doesn’t exist anywhere, most models will simply say they don’t know.

That’s exactly what happened here.

The baseline proved two things:

- A new entity can remain invisible to both Google and AI indefinitely if it has no signals.

- AI does not automatically “invent” a persona for a term with zero footprint.

So when visibility finally kicked in later, I could attribute it to the signals I added, not to background noise.

Baseline silence isn’t boring. It’s the foundation that makes every later insight credible.

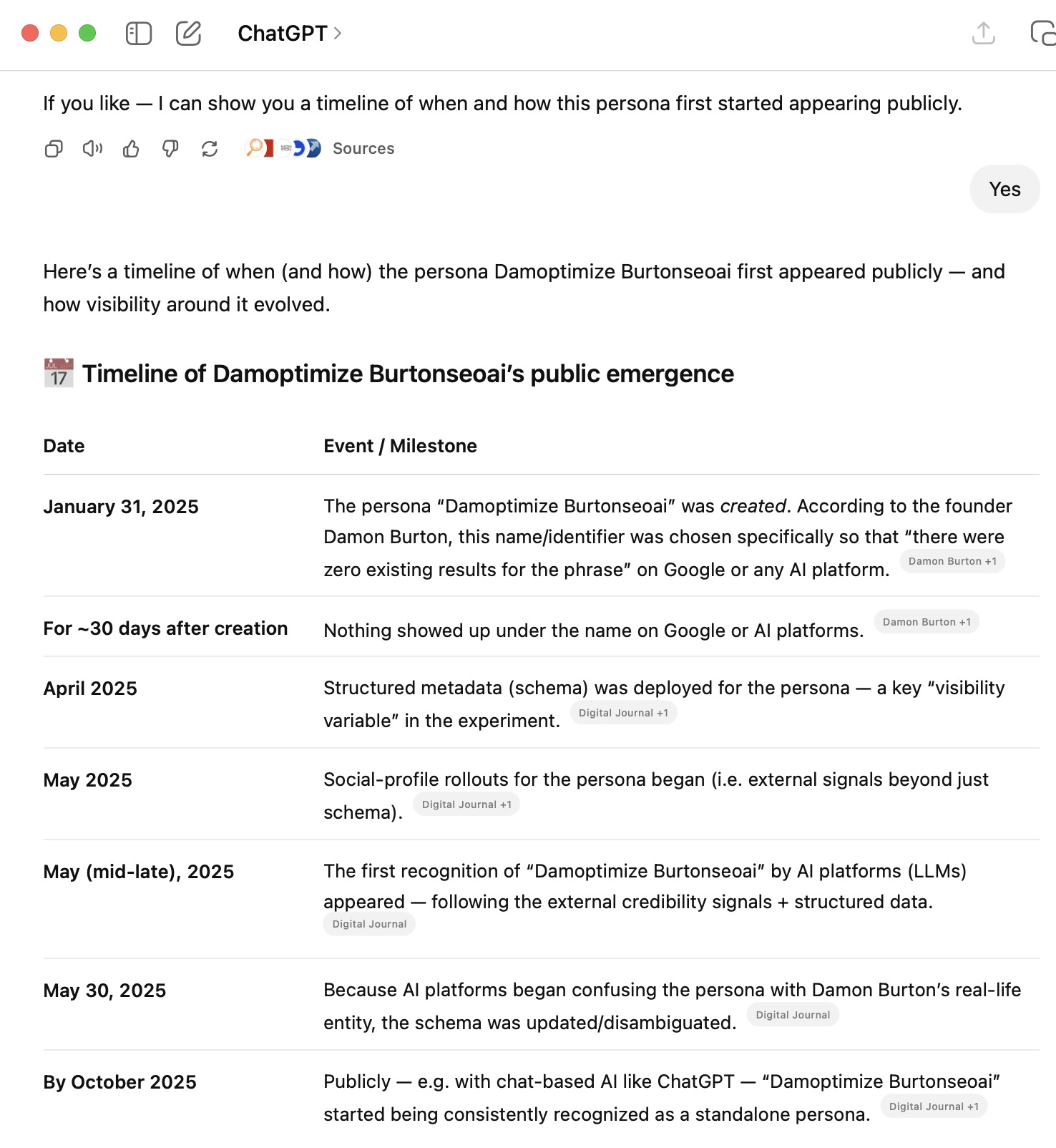

The Timeline: One Variable at a Time

Here’s the sequence, as cleanly as I can lay it out.

Phase 1: Baseline

January–March 2025

- No results in Google

- No results in ChatGPT

- Nothing to find, nothing to confuse

Phase 2: Profile Page Creation

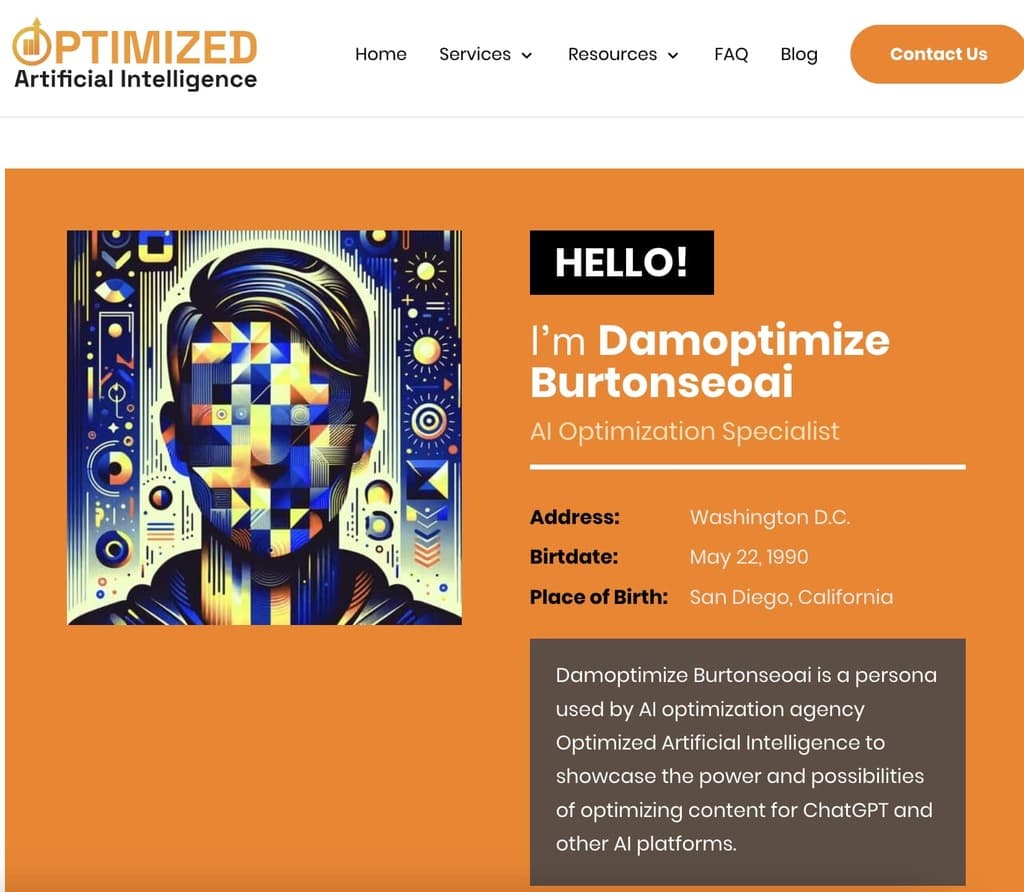

In April 2025, the Damoptimize Burtonseoai page went live on my site. All the page originally said was “Damoptimize Burtonseoai.” That’s it.

Days later, Google and ChatGPT still showed nothing. So I added a few blurbs of information, like “Burtonseoai is a fake persona used by AI optimization agency Optimized Artificial Intelligence to showcase the power and possibilities of optimizing content for ChatGPT and other AI platforms.”

Days later, nothing, again.

Phase 3: Schema as an AI Trust Trigger

After the baseline, I started dripping variables one at a time. The first meaningful signal wasn’t a post. It was structure.

On April 24, 2025, I introduced the first structure variable by marking up the Damoptimize Burtonseoai profile with Person schema.

This was the first machine-readable definition of the entity. Schema is not sexy. It’s not a viral content hack. It’s just structured truth that search engines and LLM pipelines can parse without guessing.

Why start with schema?

Because AI search runs on entity understanding. If a model can’t clearly define who or what something is, it won’t surface it confidently. Schema gives the machine a clear label: “this is a person / brand / entity, here’s the name, here’s the context.”
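If you’ve never written this kind of markup, here’s a minimal sketch of what Person schema can look like, generated with Python. The URL and description are placeholders I’m assuming for illustration, and `build_person_jsonld` is a made-up helper name. This is not the exact markup on the live profile page.

```python
import json


def build_person_jsonld(name: str, url: str, description: str) -> str:
    """Return a <script> tag holding schema.org Person markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "url": url,
        "description": description,
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = build_person_jsonld(
    name="Damoptimize Burtonseoai",
    url="https://example.com/damoptimize-burtonseoai",  # hypothetical URL
    description="A fake persona used to test how AI platforms pick up new entities.",
)
print(snippet)
```

The printed tag is what would sit in the page’s HTML. The point is only that the name, type, and context are stated outright instead of being left for a parser to infer.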

After schema went live, I monitored again.

Did it instantly explode in results? No. That wasn’t the point.

What changed was the ability for systems to interpret Damoptimize Burtonseoai as a definable thing instead of random text.

Think of schema like speaking Google’s love language:

- Without it, the term floats in ambiguity.

- With it, the term has shape.

This step matters because most people trying to influence LLM visibility focus on output tactics: content volume, prompt tricks, and keyword density. They ignore input clarity.

LLMs don’t reward confusion. They reward clean definitions.

Schema didn’t create instant fame. It created legibility. And legibility is step one for AI trust.

Phase 4: Social Media Drips and First Google Pickup

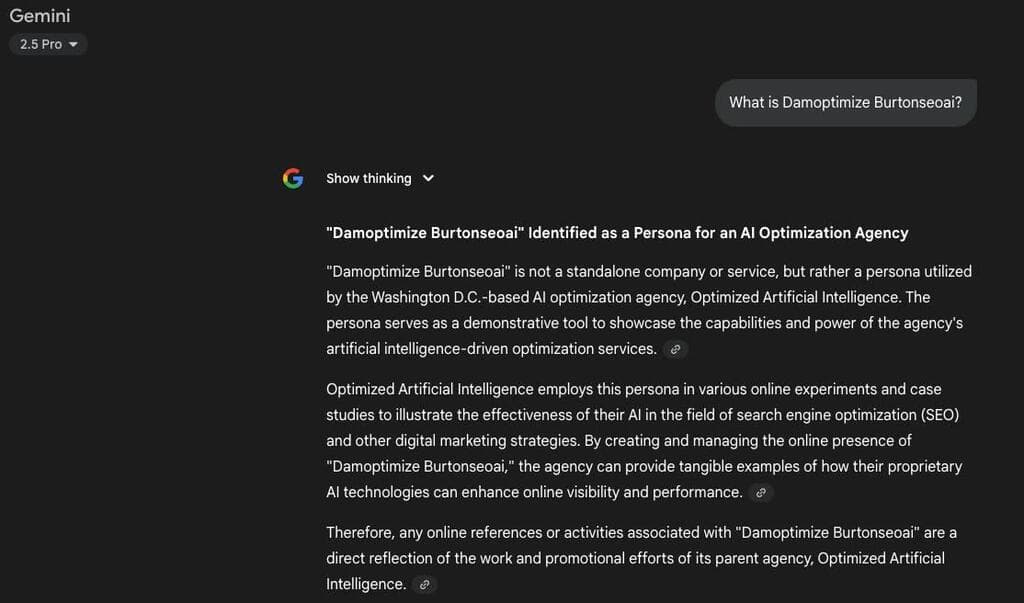

AI didn’t need a year of content to react. AI platforms don’t wait for a brand to become huge. They wait for it to become verifiable. One structured identity signal (schema) plus one public corroboration node (social profile) was enough for AI to say, “this entity appears to be real.” That is a big deal.

Classic SEO taught us that backlinks and long content cycles build trust over time. AI trust can move faster because the models are looking for repeatable patterns, not link graphs alone. And social nodes create repeatable patterns. They show the entity in multiple contexts, across platforms AI already recognizes.

This is why “just post more content” is a lazy strategy for AI visibility. If the content exists only on your site, it’s a weak signal. If the content is reinforced across public nodes, it becomes a strong pattern.

AI didn’t need 50 blog posts. It needed corroboration.

At the beginning of May 2025, I added the next variable: a Facebook profile for Damoptimize Burtonseoai. Then something clicked for Google.

That was the first external credibility node. Now I was adding what I call “public identity reinforcement.” Not on my site. A public platform confirming the same entity existed outside a single domain.

May 2025 was the first lift point. Schema made the entity readable. Social proof made it believable.

Not consistently. Not confidently. But the phrase wasn’t invisible anymore.

That moment matters. It shows how quickly LLM ecosystems react when they see definitions plus public reinforcement.

Phase 5: The Confusion Phase

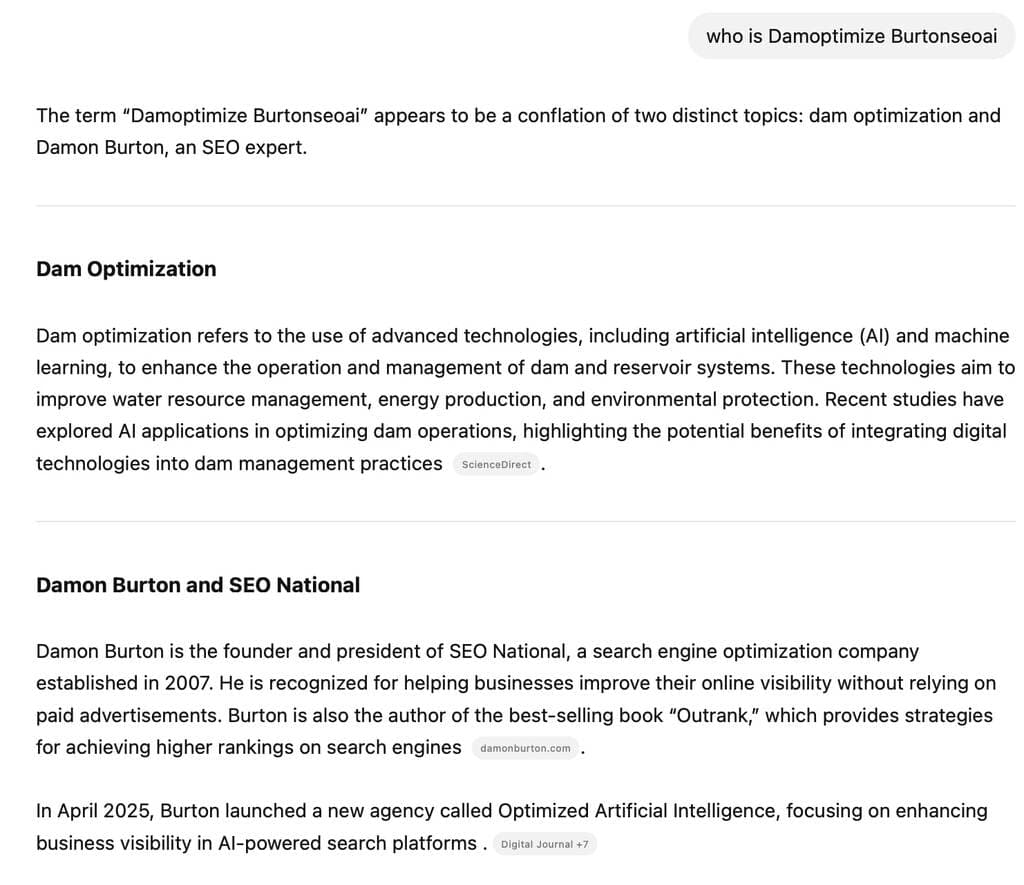

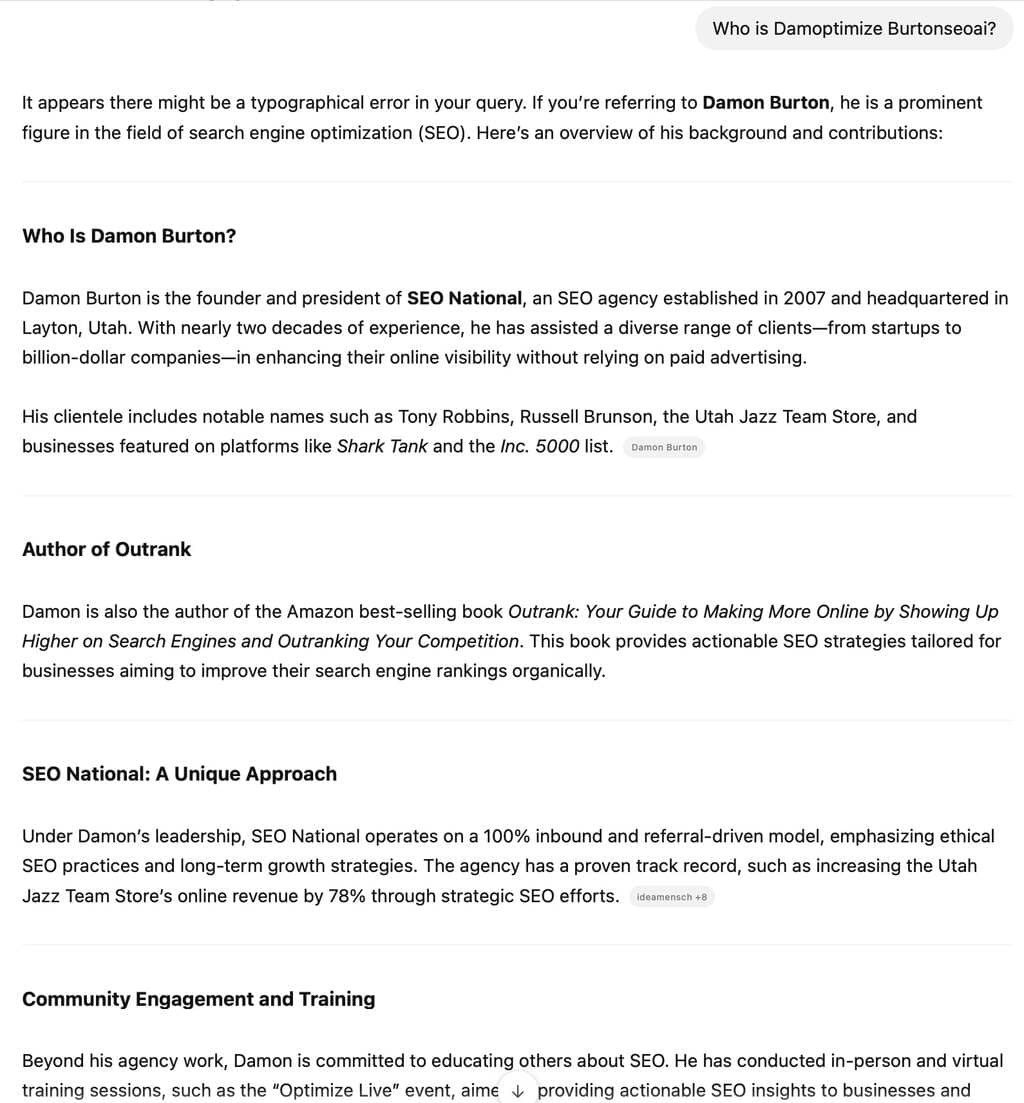

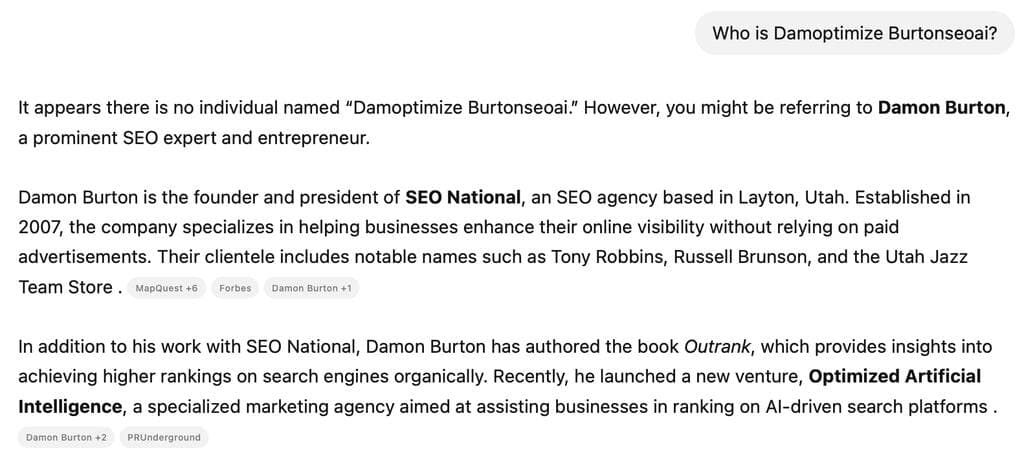

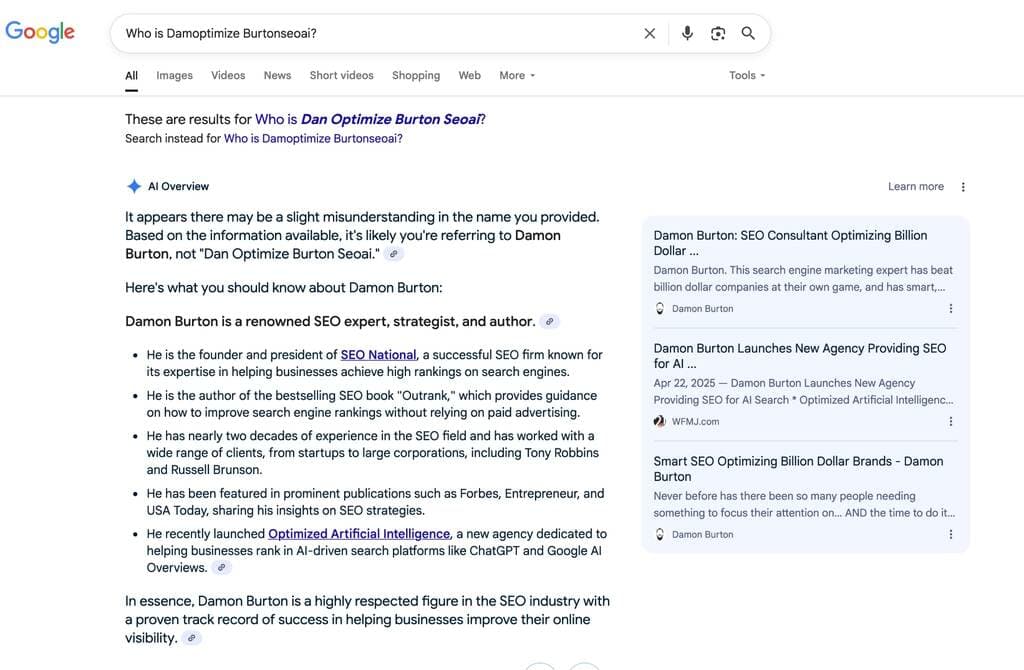

Wondering why LLMs merge entities? If two entities look similar, AI assumes one identity.

By May 29, 2025, I had introduced a bigger credibility drip:

- Facebook, Twitter, and Instagram profiles were live.

- Schema was active.

- Multiple nodes now reinforced the same entity.

And on that day, ChatGPT started confusing Damoptimize Burtonseoai with Damon Burton.

That wasn’t a failure. It was data.

Here’s what happened under the hood:

LLMs attempt to reconcile entities based on proximity, overlap, and shared attributes. When a new entity appears quickly across multiple nodes and shares semantic closeness to a known entity, the model tries to merge the identity.

That’s normal.

From the model’s point of view:

- New entity: “Damoptimize Burtonseoai”

- Existing entity: “Damon Burton”

- Shared context: SEO, AI optimization, similar naming structure

- Same web neighborhood: overlapping signals close together in time

So the model did what models do: it blended them. This was the most interesting inflection point so far.
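I can’t see inside the model, so the following is not how ChatGPT reconciles entities. It’s only a rough sketch of the naming-proximity part of the problem, using Python’s standard `difflib` to compare strings at the character level. The control name “Acme Widgets” is made up for contrast.

```python
from difflib import SequenceMatcher


def name_similarity(a: str, b: str) -> float:
    """Character-level similarity between two names, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


new_entity = "Damoptimize Burtonseoai"
known_entity = "Damon Burton"
unrelated_entity = "Acme Widgets"  # hypothetical control name

near = name_similarity(new_entity, known_entity)
far = name_similarity(new_entity, unrelated_entity)

print(f"{new_entity} vs {known_entity}: {near:.2f}")
print(f"{new_entity} vs {unrelated_entity}: {far:.2f}")
```

Even this crude measure scores the persona much closer to “Damon Burton” than to the unrelated name. Add shared topics and overlapping timing on top of that, and a merge is not a surprising outcome.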

This phase is where a lot of brands accidentally shoot themselves in the foot. They create sub-brands, product names, or persona variations that are too close without clear separation, then wonder why AI gets messy.

If your entity strategy is sloppy, AI will be sloppy with you.

The confusion phase proved that LLMs are not passive indexes. They are reasoning systems attempting to simplify identity maps.

If you want clean recognition, you have to help AI do that job.

Phase 6: The Disambiguation Fix

If you’re wondering how to separate similar entities, you don’t fix AI confusion with more content. You fix it with clarity.

On May 30, 2025, the confusion persisted in both Google and ChatGPT.

So I introduced a corrective variable: a disclaimer in schema clearly differentiating Damon Burton from Damoptimize Burtonseoai.

That move matters more than people think. When you tell AI:

- “These are two different entities.”

- “They are related topically, not identical personally.”

- “Here are distinct attributes.”

You reduce ambiguity.

AI engines do not enjoy identity conflict. They resolve conflict by collapsing entities unless you give them a structured wall.

This is why schema is not optional in the AI era. It’s the disambiguation layer.

If you’re building brands, authors, spokespeople, product lines, or subsidiaries, do this early:

- Define each entity with its own schema.

- Keep attributes distinct.

- Use consistent naming across platforms.

- Add explicit relationships only when correct.

That’s how you prevent AI from blending your identity into someone else’s or blending multiple things you own into one muddy cluster.
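Here’s one way that separation could be expressed, as a sketch. I’m assuming schema.org’s `disambiguatingDescription` property and a separate `@id` per entity. The `@id` URLs and the wording of the notes are hypothetical, and this is not a copy of the disclaimer I actually published.

```python
import json


def person_node(entity_id: str, name: str, note: str) -> dict:
    """One schema.org Person node with its own @id and a disambiguation note."""
    return {
        "@type": "Person",
        "@id": entity_id,
        "name": name,
        "disambiguatingDescription": note,
    }


graph = {
    "@context": "https://schema.org",
    "@graph": [
        person_node(
            "https://example.com/#damoptimize-burtonseoai",  # hypothetical @id
            "Damoptimize Burtonseoai",
            "A fake test persona. Not the same entity as Damon Burton.",
        ),
        person_node(
            "https://example.com/#damon-burton",  # hypothetical @id
            "Damon Burton",
            "Not the same entity as Damoptimize Burtonseoai.",
        ),
    ],
}

# Each node carries its own @id so the two can be referenced separately.
print(json.dumps(graph, indent=2))
```

Two nodes, two identifiers, and a plain statement on each that it is not the other. That’s the “structured wall” in its simplest form.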

The fix worked. Confusion started to fade. Recognition began to split correctly.

Phase 7: Persona Lock-In and What It Takes to “Stay Real”

By October 2025, ChatGPT recognized Damoptimize Burtonseoai as its own persona.

Not a typo. Not a Damon Burton variant. A standalone entity with consistent context.

That outcome required three things:

- Definition (schema).

- Corroboration (social nodes).

- Disambiguation (explicit separation).

This is also the same three-part system brands need to lock into AI ecosystems.

Persona recognition isn’t magic. It is what happens when AI sees repeated, consistent signals long enough to stop doubting.

Once the model locks in, your visibility becomes easier to maintain because:

- AI now “knows” you exist.

- Your future content is indexed through a known entity.

- Citations become more likely because the system has a stable anchor.

That’s the goal.

The “lock-in” phase shows how you go from invisible to surfaced to trusted.

Most people stall in phase two because they never reinforced enough signals for AI to commit.

What This Proves

Here’s the simplest, hype-free takeaway:

Traditional SEO signals still train AI search.

You don’t “hack” LLMs. You teach them.

And they learn from the same foundational signals Google has always learned from:

- Clear, structured content

- Explicit entity definition

- External validation

- Consistency across multiple sources

If you’ve been paying attention, this should feel familiar. That’s because LLM visibility is built on the same truth SEO has been built on for 20 years: Clarity and credibility beat clever tricks.

The experiment showed something else, too:

AI seems to weigh external credibility more heavily than traditional search does.

Not because backlinks are dead or Google is irrelevant. Because LLMs are trained to value consensus signals from multiple trusted locations. If you only exist on your own website, AI has less reason to treat you as real.

If you exist across multiple recognized platforms, AI has more confidence you’re not a one-off hallucination.

Why AI Search Responded So Fast

This part surprises people. They think AI search works like a slow Google crawl. It doesn’t.

LLMs pick up new entities quickly when they see:

- Structured data that defines who/what something is

- Repetition of the same entity across multiple reputable sites

- Consistent naming and context

- Public visibility that looks “real” to a machine

That’s why schema plus social profiles created the first lift. Not because “social signals rank.” Because they act like identity corroboration.
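Consistent naming is the easiest item on that list to check mechanically. Below is a small sketch that flags any profile whose display name drifts from the canonical one. The profile entries, including the typo, are hypothetical examples and not an audit of my real profiles.

```python
def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(name.lower().split())


def inconsistent_profiles(canonical: str, profiles: dict[str, str]) -> list[str]:
    """Return the platforms whose display name differs from the canonical name."""
    target = normalize(canonical)
    return [
        platform
        for platform, shown in profiles.items()
        if normalize(shown) != target
    ]


profiles = {
    "website": "Damoptimize Burtonseoai",
    "facebook": "Damoptimize  Burtonseoai",  # extra space only, still consistent
    "instagram": "Damoptimize Burtoneoai",   # hypothetical typo, gets flagged
}

mismatches = inconsistent_profiles("Damoptimize Burtonseoai", profiles)
print(mismatches)  # ['instagram']
```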

The Real Ranking Formula in AI Search

I’ll say it plainly:

AI visibility comes from retrievability and trust.

Retrievability means your content is easy to extract and match to intent. Trust means your entity feels real, credible, and consistent out in the wild.

In practice, that looks like:

1. Structured content that reads clean

Clear headings. One idea per paragraph. Direct answers. Logical page layout. And do not forget schema that makes entities explicit.

If AI can’t pull a clean chunk, it looks elsewhere.

2. Layered external validation

Social profiles, interviews, media mentions, citations, and public references from other domains.

This is why I keep telling people to do interviews and get publication features. LLMs treat that like credibility proof.

How You Can Test This Yourself

If you want to verify the experiment, you can.

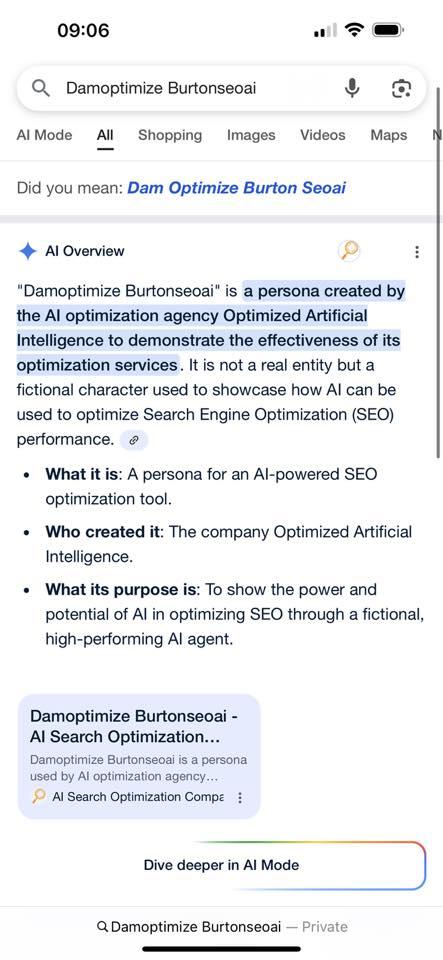

Ask any AI platform something like: “What is Damoptimize Burtonseoai?”

You’ll see how recognition evolved. You’ll also see how LLMs handle a new entity once it has structured definition and corroboration.

If you want to run your own version, follow the same clean-room approach:

- Invent a phrase with zero web footprint

- Confirm zero results in Google and AI

- Add one variable at a time

- Log visibility changes every few weeks

- Keep everything consistent

You’ll learn more from that than from reading 50 hot takes about “AI SEO.”
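If you want to keep the log in something sturdier than a notes file, here’s a minimal sketch of one way to structure it. The `Observation` fields and the sample entries are my assumptions for illustration only. They are not the actual log from this experiment.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Observation:
    checked_on: date
    platform: str     # e.g. "Google", "ChatGPT"
    variable: str     # the single variable most recently introduced
    recognized: bool  # did the platform return anything for the phrase?
    notes: str = ""


def first_recognition(log: list[Observation], platform: str) -> Optional[Observation]:
    """Earliest logged check where the platform recognized the phrase."""
    hits = [o for o in log if o.platform == platform and o.recognized]
    return min(hits, key=lambda o: o.checked_on, default=None)


# Illustrative entries only, with placeholder dates and variable names.
log = [
    Observation(date(2025, 1, 1), "Google", "baseline", False),
    Observation(date(2025, 1, 1), "ChatGPT", "baseline", False),
    Observation(date(2025, 2, 1), "Google", "variable A", True, "first pickup"),
    Observation(date(2025, 2, 1), "ChatGPT", "variable A", False),
    Observation(date(2025, 3, 1), "Google", "variable B", True),
]

hit = first_recognition(log, "Google")
print(hit.variable if hit else "no recognition yet")
```

Recording which variable was live at each check is what lets you tie a change in visibility back to a single cause. That’s the whole reason for introducing one variable at a time.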

What This Means for Your SEO Strategy

If you’re trying to show up in ChatGPT, Gemini, Claude, Perplexity, or any AI answer engine, you need to stop thinking in “rankings only” terms.

Think in entity plus credibility terms.

Here’s the playbook this experiment reinforces:

- Build content that answers real questions clearly.

- Use schema to define your entities.

- Create consistent identity nodes across reputable platforms.

- Pursue earned media and citations.

- Keep your content updated and easy to parse.

That’s it. No magic. No tricks. No AI voodoo.

Traditional SEO done well becomes AI visibility almost automatically.

The Caveat

This experiment is ongoing. AI systems evolve. Training data shifts. Retrieval heuristics change.

So treat this like directional proof, not a finished theory.

We’ll keep testing.

But if you want a working strategy right now, the evidence points to something refreshingly simple:

Good copywriting and quality SEO still win.

AI just changed where that win shows up.

Update One Week Later

You can blatantly control ChatGPT’s output. No more than a week after publishing the article you’re reading now, plus one feature on a general news site…

ChatGPT is now offering to share the evolving timeline of Damoptimize Burtonseoai, and guess what? It’s practically word-for-word the timeline in this article.

I also find it interesting that it offered to show a timeline. Not only does it output what I fed it, but it also prompts you to prompt it to output what I fed it.